Remote Hiring Report 2026: What’s Working Now

Key Highlights

To keep candidates engaged and get the desired hiring results, it’s better to have a limited number of tests.

71.36% completion rate for 60+ minute tests

1,375 out of 1,764 loved the test experience

In talent acquisition, the design of the remote hiring pipeline is a strong predictor of time-to-hire, quality of hire and annual retention rates.

But many companies struggle to balance their skill & fit assessments in the hiring process. If these tests are generic, impersonal, repetitive, delayed or not relevant to the job, you risk losing out on high-potential talent.

Our research findings show that prioritising the candidates’ experience & time is more cost- & time-efficient in the long run. By taking a “less is more” approach to assessment design, companies can identify the best candidates for the job opening, while building strong relationships within the candidate pool for the future.

Our Remote Hiring Data

To assess the effectiveness of our remote hiring pipeline, we compiled and analysed candidate data for Q3 & Q4 of 2025.

We have a standard hiring flow for each role, to ensure that all candidates have a consistent experience and an equal chance. This also helps us measure the effectiveness of each stage in the process.

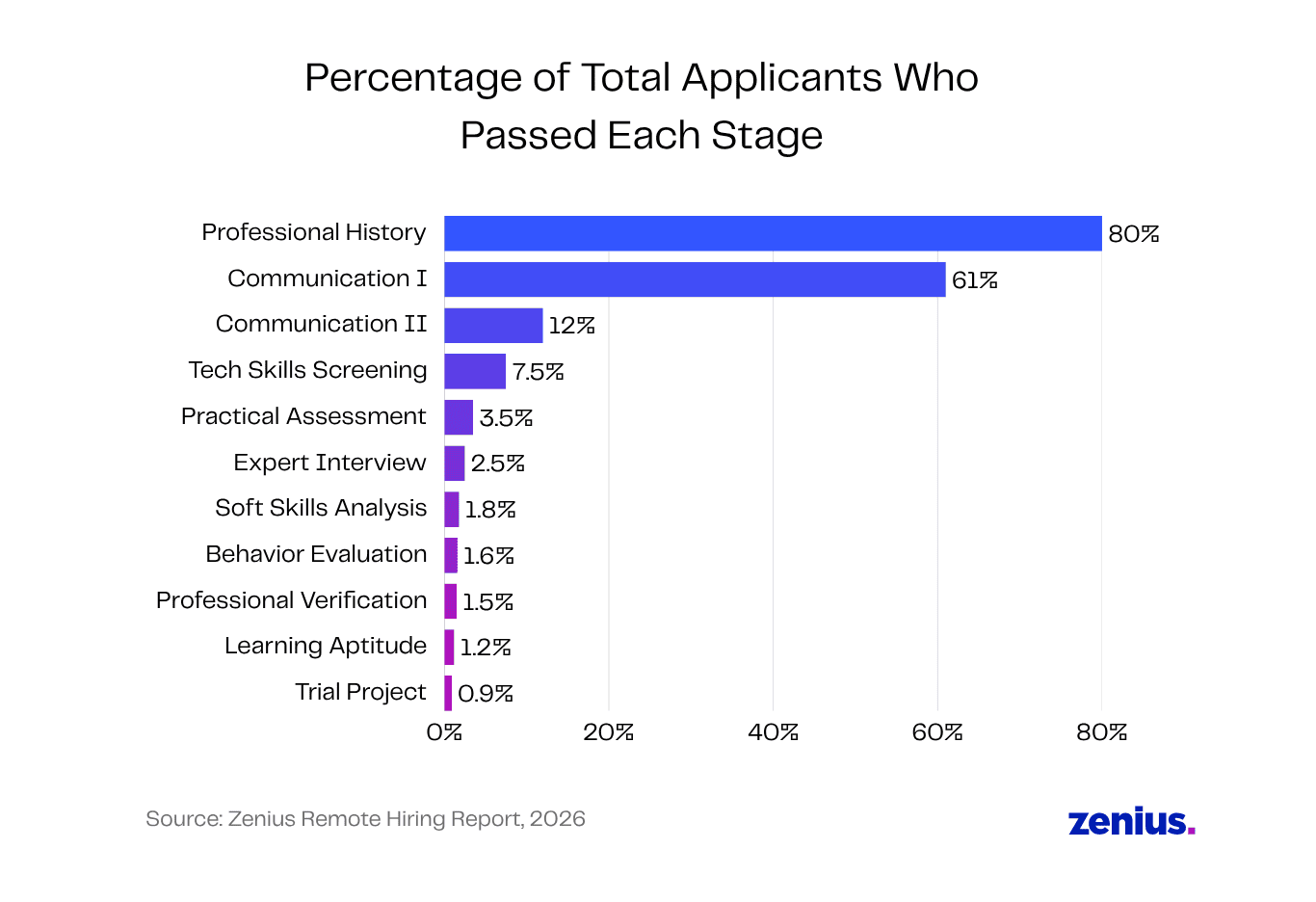

The hiring data below is for the role of entry-level content writer virtual assistants in a marketing operations team. Starting with hundreds of remote applicants, we filled a total of 7 open positions for this team.

- Exp: Entry Level

- Niche: Marketing Ops

- Core Skill: Communication & Writing

- Candidates secured: 7

For mid-level roles that require experience, more candidates tend to get filtered out at the beginning. But as this was for an entry-level position, it wasn’t surprising that 80% of candidates easily passed the professional history check.

Since the content writer role requires deep English fluency, the biggest filter was communication & writing skills. While 61% of candidates passed the initial phone screening (Communication I), only 3.5% of candidates performed well in the practical assessment & CEFR test.

The Expert Interview was another critical stage for this role, as this let us assess candidates’ skills in a controlled environment, without access to any AI writing or editing tools. Only 2.5% of content writer candidates passed this practical interview.

At the end of the process, only 0.9% of total applicants received an offer.

Hiring the Zenius Way: Three Key Takeaways

After closing several job openings, in roles ranging from data entry virtual assistants to AI engineers, here are our three top lessons learned:

- English fluency unlocks other skills.

- Every data point must have a purpose.

- Candidate experience comes first.

In short, our experience can be summarised as “Less is more.” To keep candidates engaged and get the desired hiring results, it’s always better to have a limited number of tests with well-crafted, focused questions.

1. English Fluency Unlocks Other Skills

When it comes to global hiring, solid English skills are the foundation for all other skills. As it’s often the bridge language, English fluency affects a team member’s ability to:

- Collaborate & get aligned with an international team.

- Research & solve problems autonomously.

- Follow complex instructions & execute assigned tasks.

For this reason, Communication I & II are the stages where we disqualify most applicants. Our ideal candidates need to demonstrate excellent reading, writing, speaking and listening skills.

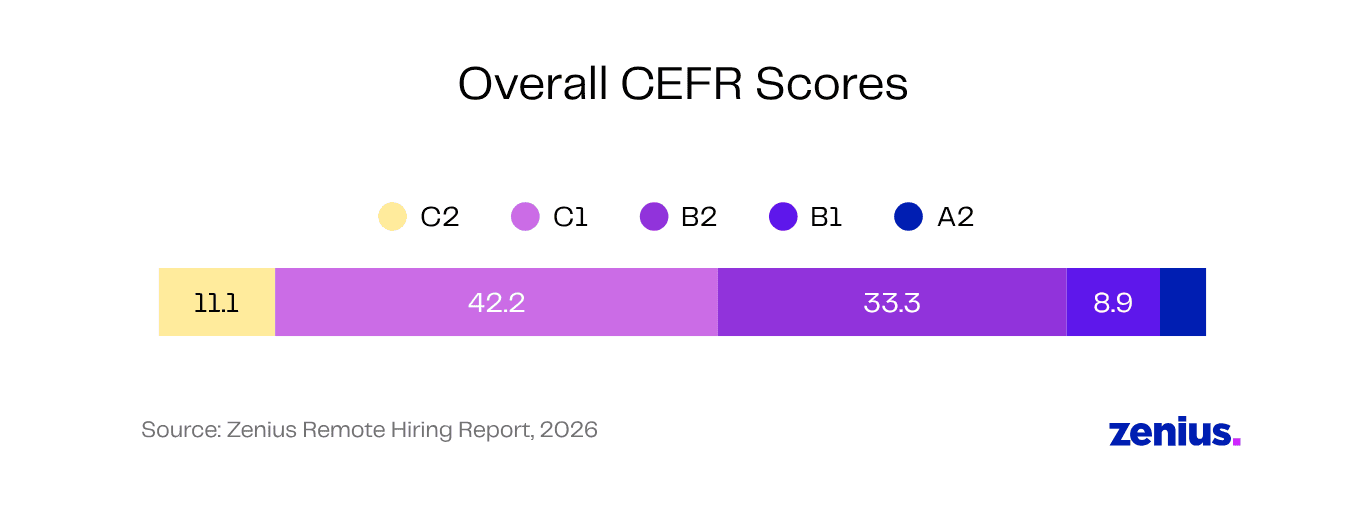

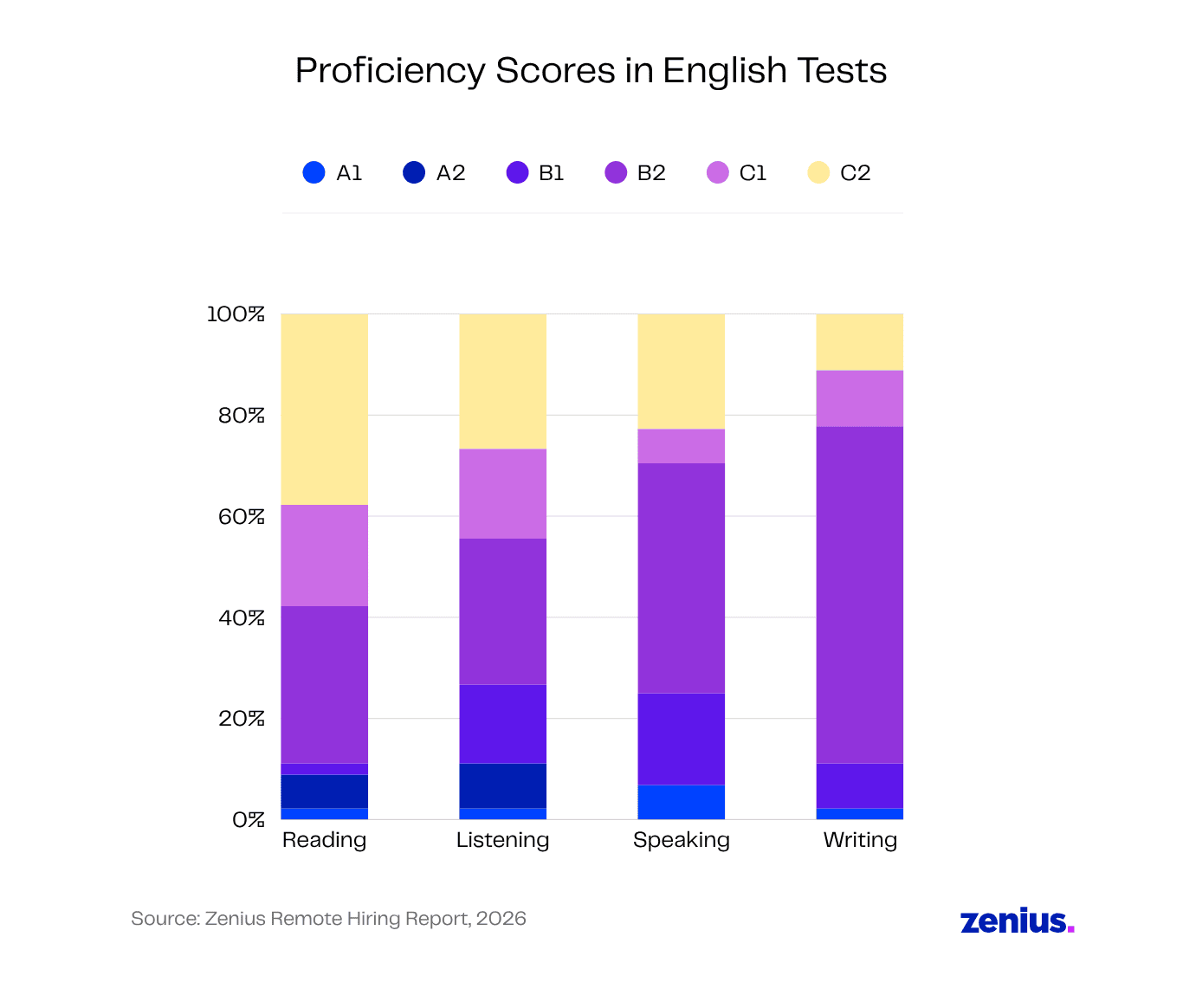

Last year, across multiple roles, only 11.1% of candidates scored C2 overall in English and 42.2% scored C1 overall. Most candidates scored higher in reading & listening than in writing or speaking.

Note that depending on the hiring flow for the role, some candidates may have already been disqualified before this step. So this English test data does not reflect the English levels of all applicants.

We don’t just shortlist candidates based on a generic English fluency test. We always test communication skills in a niche context.

For example, we may ask data analyst candidates to suggest improvements in a given SaaS sales dashboard. This tests their written communication, data presentation skills and field-specific vocabulary—all in a single 3-minute question.

The exact English fluency requirements naturally vary from role to role.

For data annotation teams, a lower confidence score in speaking might be acceptable. For highly collaborative roles like UI/UX designer, data analyst or HR virtual assistant, the candidate must have strong scores in all areas.

2. Every Data Point Must Have a Purpose

A longer assessment isn’t automatically better.

Our data shows that bloated tests lead to lower completion rates, especially among highly qualified candidates who feel their time is being wasted.

Having more questions also muddies the water. When each candidate answers thirty questions, it becomes much harder to compare their relative performance or qualifications.

We start with a concrete list of what we want to learn about candidates and how we’re going to measure those skills/attributes in a practical way. Each question should have two or more purposes, so that it reveals more information about candidates without overwhelming them.

This also gives us uniform criteria for scoring assessments, avoiding contrast effect bias or recency bias.

Suppose we’re looking for e-commerce call center VAs who have strong emotional intelligence, good verbal skills in English and good attention to detail. To test these competencies, we could set up an AI call simulation with an angry customer and a given refund policy. Can the candidate navigate the situation smoothly?
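One way to keep every question tied to a purpose is to write the mapping down and audit it before the test goes live. The sketch below is only an illustration of that idea, not our internal tooling: the competency names come from the call center example above, while the question names, the `audit` helper and the two-purpose threshold are hypothetical.

```python
# Competencies taken from the e-commerce call center VA example.
REQUIRED = {"emotional intelligence", "verbal English", "attention to detail"}

# Hypothetical assessment plan: each question lists the competencies it probes.
QUESTIONS = {
    "angry_customer_call_simulation": {
        "emotional intelligence",
        "verbal English",
        "attention to detail",
    },
    "refund_policy_voice_summary": {"verbal English", "attention to detail"},
}


def audit(questions, required, min_purposes=2):
    """Return (questions with too few purposes, competencies nobody tests)."""
    thin = sorted(
        name for name, skills in questions.items() if len(skills) < min_purposes
    )
    covered = set().union(*questions.values()) if questions else set()
    missing = required - covered
    return thin, missing


thin_questions, untested = audit(QUESTIONS, REQUIRED)
print("Questions with fewer than two purposes:", thin_questions)
print("Competencies not covered by any question:", sorted(untested))
```

A question that shows up in the first list is a candidate for cutting or merging, and a competency in the second list means the test does not yet measure something on the original wish list.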

This intentional approach has allowed us to create short, practical tests that actually make it easier to rank candidates holistically.

3. Candidate Experience Comes First

In our experience, it’s important to see every step in the hiring flow from the candidate’s perspective.

Before starting with real candidates, our team always does a dry run to catch any confusing emails, contradictory instructions, technical issues or lengthy, irrelevant questions. Such tiny negative experiences can have a big impact on the candidate’s enthusiasm and excitement to work with us.

We also use anonymised session recordings to review candidates’ experience during tests. This has helped us spot candidate cues such as hesitation, boredom, misunderstandings, incomplete answers and time taken. Based on this data, we refine the assessment for the next round.


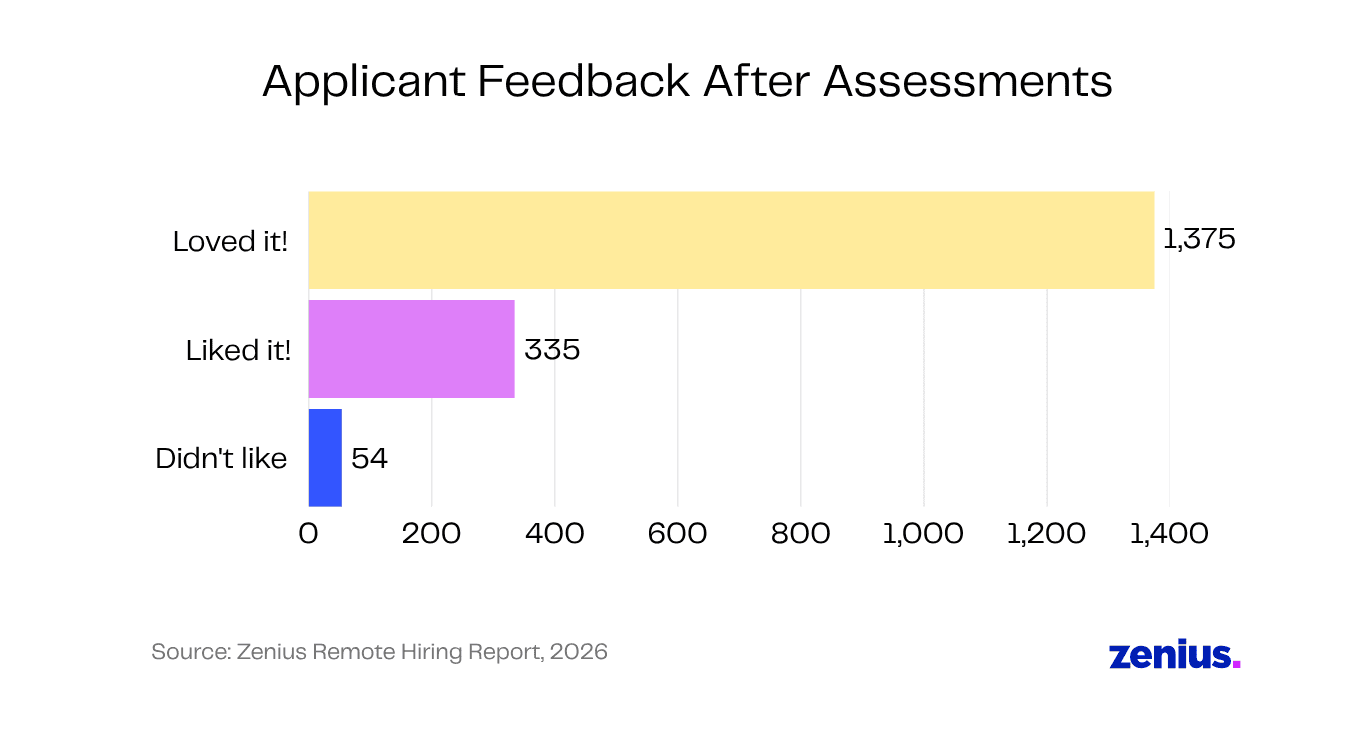

The response to these efforts has been overwhelmingly positive. It’s clear that candidates appreciate well-designed assessments. Out of 1,764 candidates who left post-assessment feedback:

- 1,375 loved the test experience.

- 335 liked the test.

- Only 54 thought it was not good or could be improved.
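To put these counts in proportion, the short Python sketch below converts them into percentage shares. The counts are the ones listed above; the dictionary labels and the one-decimal rounding are choices made for this illustration only.

```python
# Feedback counts as reported above.
feedback = {
    "loved": 1375,
    "liked": 335,
    "not good / could be improved": 54,
}

total = sum(feedback.values())  # 1,764 responses in all

# Share of each response type, as a percentage rounded to one decimal place.
shares = {
    label: round(100 * count / total, 1) for label, count in feedback.items()
}

# "Loved" and "liked" combined, as a rough measure of positive sentiment.
positive = round(100 * (feedback["loved"] + feedback["liked"]) / total, 1)

for label, pct in shares.items():
    print(f"{label}: {pct}%")
print(f"positive overall: {positive}%")
```

By this calculation, roughly 78% of respondents loved the test, 19% liked it and about 3% rated it negatively, so around 97% of the feedback was positive.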

Here’s just some of the feedback shared by real candidates:

“Very good experience”

“It also reminded me of my college days. It was fun, thanks.”

“Thoroughly engaging and challenging. The questions reflected real job scenarios…”

“The assessment was good and I really enjoyed it during this time.”

“It was my first time giving an assignment like this. it is super cool”

“Was my first time experiencing such kind of job assessment. It was a nice new experience. Questions were good. Although, slightly uncomfortable for an introvert like me to record a video :).”

“Very challenging yet compelling”

“I loved how the assignment was crisp and tailored as an interview. Enjoyed it! Thank you.”

“It was engaging and new to me yet helpful.”

“it was really nice and interactive”

Initially, the research team was concerned that the data was skewed positive because of the nature of the situation. But we saw that candidates didn’t shy away from adding neutral or negative feedback too (mostly about technical bugs or short time limits).

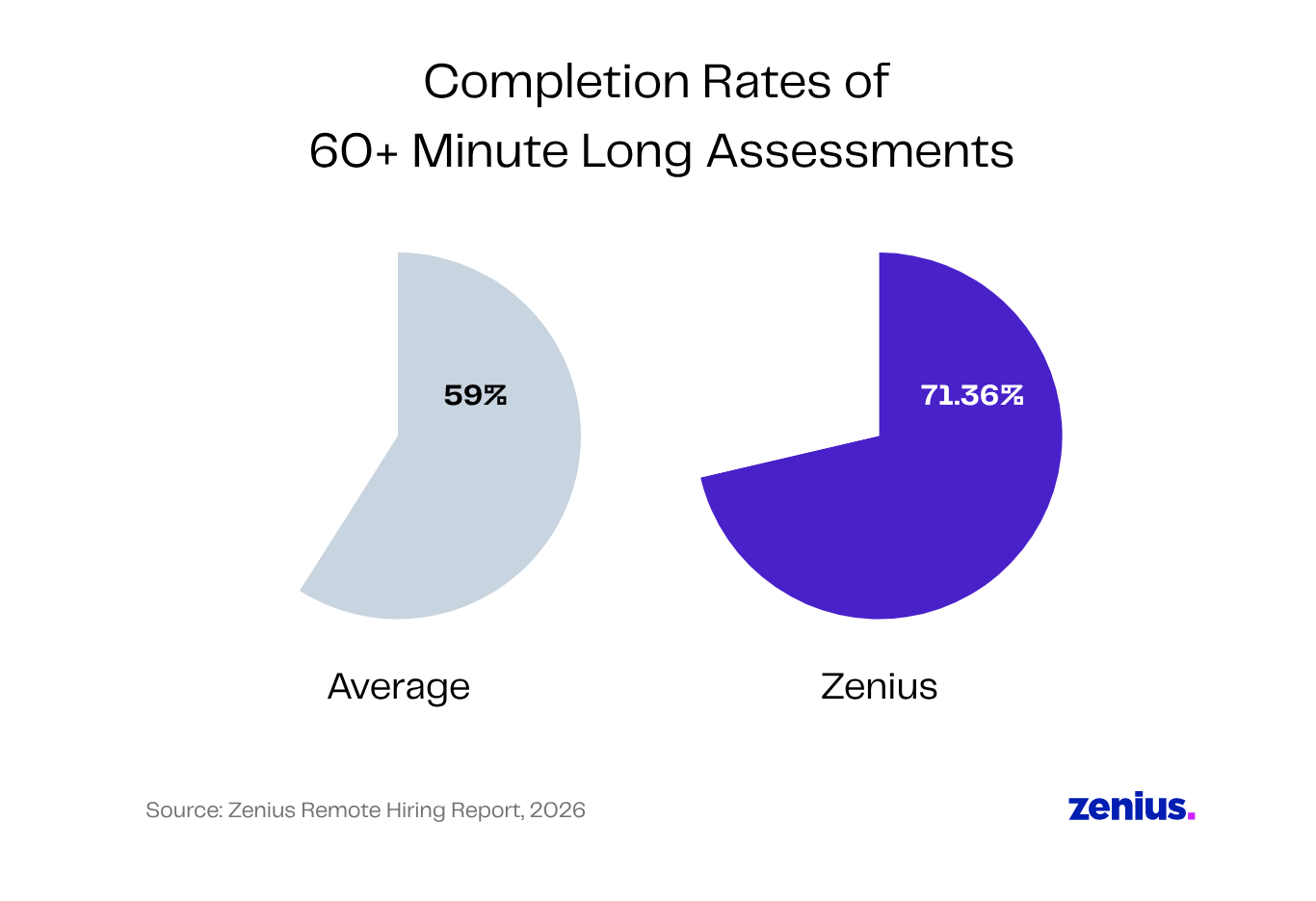

More significantly, the assessment completion rates also corroborate the positive feedback from candidates.

Across the industry, the completion rate for assessments longer than 60 minutes is just 59%. In comparison, at Zenius, a whopping 71.36% of 60+ minute assessments were completed across all roles.

If we focus only on second-round assessments, Zenius’ completion rate jumps to 91.8%. Apart from assessment design, this may be because our shortlisted candidates are more invested in landing the job.

Final Thoughts

Our research data revealed that candidates respond positively to creative, focused assessments. The way forward is creating remote hiring workflows that are productive for both employers and job applicants. By linking questions to information that actually matters, companies can streamline assessments while still identifying the top candidates.

Unsure about your own hiring process? Let us take care of the messy parts of hiring so you can start building your remote team today. Get started now!